Introduction

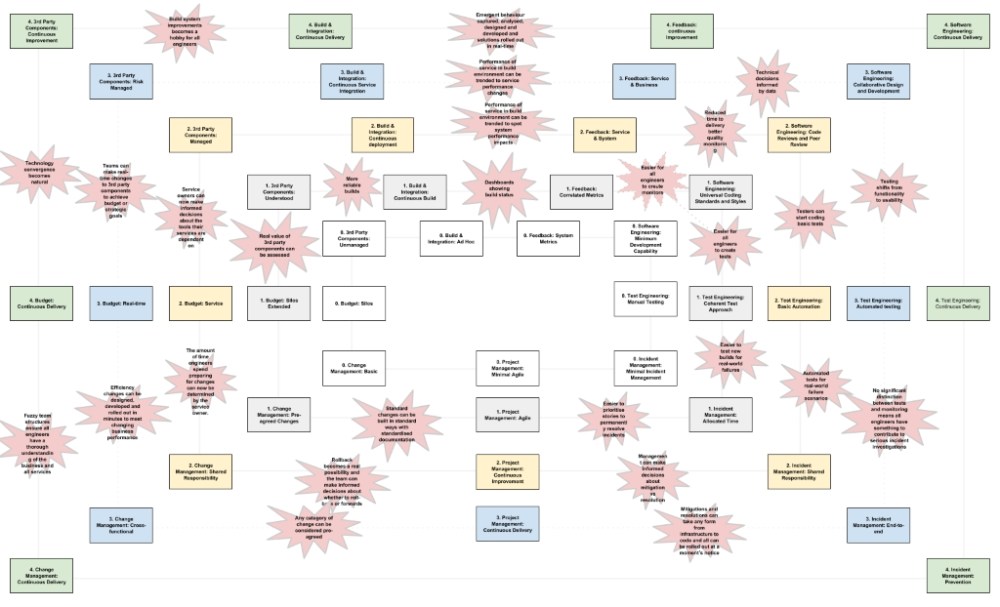

This is part one of a two-part series. In this article I’m going to run a case-study capability assessment using my newly published Next Gen DevOps Transformation Framework: https://github.com/grjsmith/NGDO-Transformation-Framework.

I’m going to use Playfish (as it was in August 2010 when I joined) as my target organisation. Playfish no longer exists and there’s almost no one left at EA who would remember me, so I’m not likely to be sued if I’m a bit too honest. I’m only going to review two capabilities, one that scores low and one that scores high; otherwise this blog post will turn into a 20-page TL;DR fest.

Next week I’ll publish the second part of this series, where I’ll discuss the recommendations the framework makes to help Playfish level its capabilities up, contrast them with what we actually did, and talk about why we made the choices we did and where those choices led us.

But first a little context

Playfish made Facebook games, but that was just one of many things that made Playfish remarkable. Playfish had extraordinary vision, diversity and capability, but coming from AOL the thing that stood out most for me was that all Playfish technology was underpinned by Platform-as-a-Service or Software-as-a-Service solutions.

Playfish’s games were really just complex web services. The game the player interacts with is a big Flash client that renders in the player’s browser. The game client records the player’s actions and sends them to the game server. The server then plays these actions back against its rule set, first to check that they are legal and then to record them in a database of game state. The game is asynchronous in that the client lets the player do what they want and the instructions are validated afterwards. This meant that Playfish game sessions could survive quite variable ISP performance, allowing Playfish to be successful in many countries around the world.
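To make the replay idea concrete, here is a minimal sketch in Python. It is not Playfish’s code: the action types, the `PRICES` table, the earn limit and the in-memory `GameState` standing in for the database are all hypothetical, and rejecting illegal actions one at a time (rather than rejecting the whole batch) is an assumption on my part.

```python
from dataclasses import dataclass, field

# Hypothetical rule data; the real rule sets were game specific.
PRICES = {"fish_food": 5, "castle": 50}
MAX_EARN_PER_ACTION = 10


@dataclass
class GameState:
    """Stand-in for the game state stored in the database."""
    coins: int = 0
    items: list = field(default_factory=list)


def is_legal(state, action):
    """Check one recorded client action against the rule set."""
    if action["type"] == "earn":
        return 0 < action["amount"] <= MAX_EARN_PER_ACTION
    if action["type"] == "buy":
        price = PRICES.get(action["item"])
        return price is not None and state.coins >= price
    return False  # unknown action types are never legal


def apply_action(state, action):
    """Record a legal action in the game state."""
    if action["type"] == "earn":
        state.coins += action["amount"]
    elif action["type"] == "buy":
        state.coins -= PRICES[action["item"]]
        state.items.append(action["item"])


def replay(state, actions):
    """Replay a batch of client actions in order; return the rejected ones."""
    rejected = []
    for action in actions:
        if is_legal(state, action):
            apply_action(state, action)
        else:
            rejected.append(action)
    return rejected


# The client has already let the player do all of this before the server sees it.
state = GameState()
batch = [
    {"type": "earn", "amount": 10},
    {"type": "buy", "item": "fish_food"},
    {"type": "buy", "item": "castle"},  # not enough coins left for this one
]
rejected = replay(state, batch)
```

Because the client only needs to deliver the batch eventually, a slow or flaky connection delays validation rather than blocking play.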

The game server then interacts with a bunch of other services to provide CRM, Billing, item delivery and other services. So we have a game client, game service, databases storing reference and game state data and a bunch of additional services providing back-office functions.

From a technical organisation perspective Playfish was split into 3 notional entities:

The games teams were in the Studio organisation. They were cross-functional teams made up of producers, product managers, client developers, artists and server developers, all working together to produce a weekly game update.

The Engineering team developed the back-office services. There were two teams who split their time between something like 10 different services. These teams weren’t pressured to provide weekly releases; rather, they developed additional features as needed.

Finally, the Ops team managed the hosting of the games and services, along with all the other 3rd party services Playfish was dependent on, like Google Docs, JIRA Studio and a few other smaller services.

External to these organisations there were also marketing, data analysis, finance, payments and customer service teams.

Just before we dive in, let me remind you that we’re assessing a company that released its first game in December 2007. When I joined it was really only 18 months old and had grown to around 100 people, so this was not a mature organisation.

Without further ado let’s dive into the framework and assess Playfish’s DevOps capabilities as they were in August 2010.

Build & Integration

We’ll choose to look at Build & Integration first as this was the first major problem I was asked to resolve when I took on the Operations Director role at Playfish.

Build and Integration: ad-hoc, Capability level 0, description:

Continuous build ad hoc and sporadically successful.

This seems like an accurate description of the Playfish I remember from 2010.

Capability level 0 observed behaviours are:

Only revenue generating applications are subject to automated build and test.

Automated builds fail frequently.

No clear ownership of build system capability, reliability and performance.

No clear ownership of 3rd-party components.

This isn’t a strict description of the state of Playfish’s build and integration capabilities.

The games and the back-office services all had automated build pipelines, but there was only very limited automated testing. The individual game and service builds were fairly reliable, but that was due in part to the lack of any sophisticated automated testing. The games teams struggled to ensure functionality with the back-office components. Playfish had only recently transitioned to multiple back-office services when I joined and was still suffering some of the transitional pains. There was no clear ownership of the build system. Some of the senior developers had set one up and begun running it as an experiment, but pretty soon everyone was using it. It was considered production, yet no one had taken ownership of it, so when it under-performed everyone looked at everyone else. 3rd party components were well understood and well owned at Playfish. Things could have been a little more formal, but at Playfish’s level of maturity it wasn’t strictly necessary.

Let’s take a look at the level 1 build and integration capabilities before we settle on a rating. Description:

Continuous build is reliable for revenue generating applications.

Observed behaviours:

Automated build and test activities are reliable in the build environment.

Deployment of applications to production environment is unreliable.

Software and test engineers concerned that system configuration is the root cause.

Automated build and test were not reliable. Deployment was unreliable and everyone was concerned that system configuration was to blame for the unreliability of deployments.

So in August 2010 Playfish’s Next Gen DevOps Transformation Framework Build & Integration capability was level 0.

Next we’ll look at 3rd Party Component Management. I’m choosing this because Playfish was founded on the principle of using PaaS and SaaS solutions where possible, so it should score highly, but I suspect it will be interesting to see how.

3rd Party Component Management

Capability level 0 description:

An unknown number of 3rd party components, services and tools are in use.

This isn’t true. Playfish didn’t really have enough legacy to lose track of its 3rd party components.

Capability level 0 behaviours are:

No clear ownership, budget or roadmap for each service, product or tool.

Notification of impacts are makeshift and motley causing regular interruptions and impacts to productivity.

All but one 3rd party provided service had clearly defined owners. Playfish had clear owners for the relationship with Facebook, and the only tool in dispute was the automated build system. There were a variety of 3rd party libraries in use, and these were never used from source so they never caused any surprises. While not every one of these libraries had a clear owner, all the teams kept an eye on their development and there were regular emails about updates and changes.

There were no formal roadmaps for every product and tool but their use was constantly discussed.

So it doesn’t seem that Playfish was at level 0.

Capability level 1 description:

A trusted list of all 3rd party provided services, products and tools is available.

There was definitely no documented list of all the 3rd party services, products and tools, so it may be that Playfish should be considered at level 0, but let’s apply some common sense (required when using any framework) and take a look at the observed behaviours.

Capability level 1 observed behaviour:

Informed debate of the relative merits of each 3rd party component can now occur.

Outages still cause incidents and upgrades are still a surprise.

There was regular informed debate about the relative merits of almost all the 3rd party services, products and tools. No planned maintenance of 3rd party services, products or tools caused outages.

So while Playfish didn’t have a trusted list of all 3rd party provided services, products and tools, it didn’t experience the problems that might be expected. This was because it was a very young organisation with very little legacy and a very active and engaged workforce. Since we don’t see the problems listed in the observed behaviours, let’s move on to level 2.

Description for Capability level 2:

All 3rd party services, products and tools have a service owner.

While there was no list it was well understood who owned all but one of the 3rd party services, products and tools.

Capability level 2 observed behaviour:

Incidents caused by 3rd party services are now escalated to the provider and within the organisation.

There is organisation wide communication about the quality of each service and major milestones such as outages or upgrades are no longer a surprise.

There is no way to fully assess the potential impact of replacing a 3rd party component.

Incidents caused by 3rd party services, products and tools were well managed. There was organisation-wide communication about the quality of 3rd party components, and 3rd party upgrades and planned outages did not cause surprises. The 3rd party components in use were very well understood and debates about replacing them were common. We even used Amazon’s cloud services carefully to ensure we could switch to other cloud providers should a better one emerge. We once deployed the entire stack and a game to OpenStack and it ran with minimal work (although this was much later). The use of different components was frequently debated, and it wasn’t uncommon for us to use multiple alternative components on different infrastructure within the same game or service to see real-world performance differences first-hand.

So while Playfish didn’t meet the description of Capability Level 2, its behaviour exceeded that predicted in the observed behaviours.

Let’s take a look at Capability level 3:

Strategic roadmaps and budgets are available for all 3rd party services, products and tools.

There definitely weren’t roadmaps and budgets allocated for any of the 3rd party services. To be fair, when I joined Playfish it didn’t really operate budgets.

Capability level 3 observed behaviour:

Curated discussions supported by captured data take place regularly about the performance, capability and quality of each 3rd party service, product and tool. These discussions lead to published conclusions and actions.

Again, Playfish’s management of 3rd party components doesn’t match the description but it does match the observed behaviour. Numerous experiments were performed assessing the performance of existing components in new circumstances or comparing new components in existing circumstances. Debates were common and occasionally resolved into experiments. Tactical decisions were made based on data gathered during these experiments.

Let’s move on to capability level 4:

Continuous Improvement

There was a degree of continuous improvement at Playfish but let’s take a look at the observed behaviours before we draw a conclusion:

3rd party components will be either active open-source projects that the organisation contributes to or they will be supplied by engaged, responsible and responsive partners

This description fairly accurately matches Playfish’s experience.

So in August 2010 Playfish’s 3rd Party Component Management capability was level 4.

It should be understood that Playfish was a business set up around the idea that 3rd party services, products and tools would be used as far as possible. It should also be remembered that at this stage the company was about 18 months old, hence the behaviours were good even though it hadn’t taken any of the steps necessary to ensure good behaviour.
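If you want to keep a record of an assessment like the two above, one option is to capture each level judgement as data. The sketch below is only my illustration of the walk-up-the-levels approach used in this post; the `LevelJudgement` type and the `rate` function are hypothetical and are not part of the framework itself. The `reached` flag is the assessor’s call, based on observed behaviour rather than on the level description.

```python
from dataclasses import dataclass


@dataclass
class LevelJudgement:
    level: int
    reached: bool   # does observed behaviour meet or exceed this level?
    note: str = ""  # the evidence behind the call


def rate(judgements):
    """Walk the levels upwards and stop at the first one not reached.

    Level 0 is the floor, so an empty list or a failed level 1 rates 0.
    """
    rating = 0
    for judgement in sorted(judgements, key=lambda j: j.level):
        if not judgement.reached:
            break
        rating = judgement.level
    return rating


build_and_integration = [
    LevelJudgement(1, False, "automated build and test were not reliable"),
]

third_party_components = [
    LevelJudgement(1, True, "informed debate; planned maintenance caused no outages"),
    LevelJudgement(2, True, "owners understood; incidents well managed"),
    LevelJudgement(3, True, "experiments and data drove tactical decisions"),
    LevelJudgement(4, True, "engaged and responsive partners"),
]

print(rate(build_and_integration))   # 0
print(rate(third_party_components))  # 4
```

Keeping the notes alongside each judgement makes it easier to revisit the rating later, or to argue with it.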

Conclusion

Using the Next Gen DevOps Transformation Framework to assess the DevOps capabilities of an organisation is a very simple exercise. With sufficient context it can be done in a few hours. If you want someone external to run the process it will take a little longer as they will have to observe your processes in action.

Look out for next week’s article when I’ll examine what the framework recommends to improve Playfish’s Build & Integration capabilities and contrast that with what we actually did.