Dune, by Frank Herbert, is an amazing book and an equally amazing David Lynch movie. It’s also a phenomenal management book and contains some valuable wisdom that we can apply to DevOps.

In this article I’m going to pull out various quotes and thoughts from the characters in Dune and share with you some of the lessons I’ve taken from them.

One of the most powerful lessons I learned from Dune comes about halfway through the book. Just before a pivotal moment in the story, the lead character, Paul Atreides, reflects:

“Everything around him moved smoothly in the ancient routine that required no order.

‘Give as few orders as possible,’ his father had told him … once … long ago. ‘Once you’ve given orders on a subject, you must always give orders on that subject.’”

As a leader, this advice has been invaluable to me. A team that understands the values of its leader, and the behaviour he or she expects from them, far outperforms a team that is given constant instruction and supervision.

Not only does this hold true for managing teams but also for DevOps. When infrastructure is considered as individual machines that need care and maintenance, it encourages us to give them constant orders: permit this person to issue this command, archive this log at this time, execute this command and so on. Considering infrastructure as a system composed of identical nodes encourages us to create behaviours that the system as a whole conforms to. This is modern configuration management in a nutshell. Any nodes that display behaviour out of the ordinary are simply replaced. This makes it easier to manage all the different environments the same way, which makes application behaviour more predictable. That reduces the time spent diagnosing why problems occur in one environment and not another, which in turn reduces the time needed to implement new features.
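To make the idea of managing behaviour rather than individual machines a little more concrete, here is a minimal Python sketch. It is not how any particular configuration management tool works; the `DESIRED` settings, the `Node` class and the `reconcile` function are all hypothetical names invented for illustration. The point is only that the "order" is given once, as a description of the desired state, and nodes that drift from it are replaced rather than repaired.

```python
from dataclasses import dataclass

# Hypothetical desired state that every node is expected to match.
DESIRED = {"log_rotation": "daily", "ssh_root_login": "disabled"}


@dataclass
class Node:
    name: str
    state: dict


def reconcile(nodes, desired=DESIRED):
    """Return (fleet, replaced): drifted nodes are swapped for fresh ones."""
    fleet, replaced = [], []
    for node in nodes:
        if node.state == desired:
            fleet.append(node)
        else:
            # No per-node orders: a drifted node is replaced, not repaired.
            replaced.append(node.name)
            fleet.append(Node(name=f"{node.name}-replacement", state=dict(desired)))
    return fleet, replaced


nodes = [
    Node("web-1", dict(DESIRED)),
    Node("web-2", {"log_rotation": "weekly", "ssh_root_login": "disabled"}),
]
fleet, replaced = reconcile(nodes)
print(replaced)  # names of the nodes that were replaced
```

In this toy run only `web-2` has drifted from the desired state, so it is the only node that gets replaced. Nobody had to tell it individually how to rotate its logs.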

What Paul’s father, the Duke Leto Atreides, knew was that people whose loyalty he had earned respected his values and wanted to work according to them. What I’ve learned is that systems whose behaviour is managed are more reliable and require considerably less maintenance than systems that are poked and prodded at.

Dune even goes so far as to offer us DevOps axioms:

Earlier in the book Paul is being tested by one of his mother’s teachers and she tells him:

“In politics, the tripod is the most unstable of all structures.”

She’s referring to the major structures of government that make up the world of Dune.

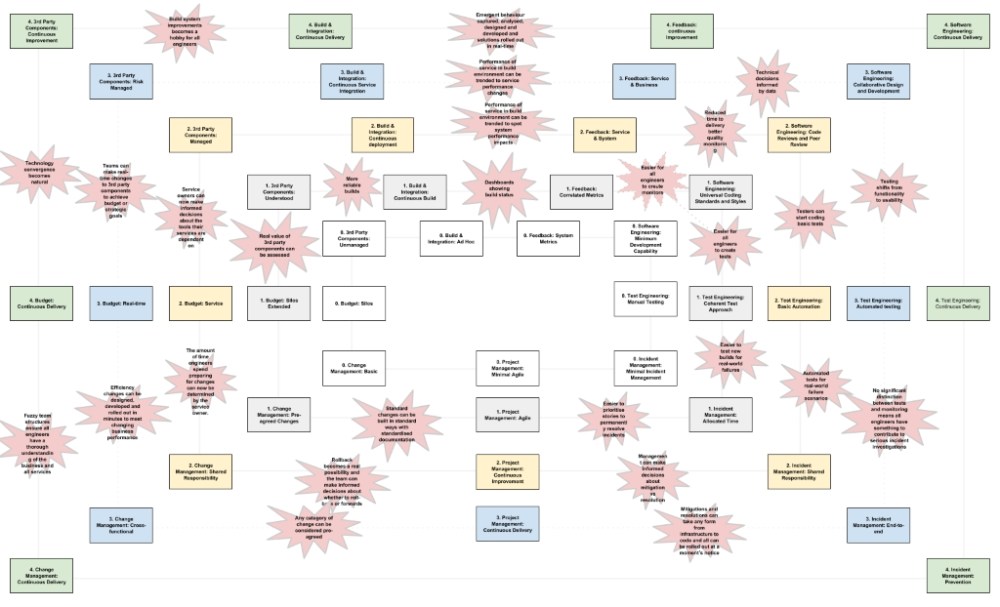

However, there’s a lesson for us in Technology here too. The three great competing forces in a typical technology department are Development, Operations and Testing. Just as in Dune, there can be no stable and productive arrangement of these three groups.

Development are incentivised to change and grow capability. Testing are at the whim of Development but are often underfunded and unloved. Operations are incentivised by stability and predictability and often take a cautious position that plays well to the needs of a test team always looking for more time. If Testing receives funding then they form a power bloc with Development which besieges Operations. So there can never be a stable, productive accord between these three groups as long as they remain three divided groups.

The lesson for us here is not to create these three structures in the first place, or to break down the silos that constrain them if we already have them. By creating product-centric service teams composed of engineers from all the essential disciplines, the team will have all the skills they need to build, launch, manage and support their service. This new type of team is motivated by the performance of their service, not merely its infrastructure, its code or its conformance to predefined criteria.

There’s also some great practical advice for troubleshooting scaling problems. Early in the book Paul quotes the first law of Mentat. In Dune, Mentats are human computers capable of processing data at incredible rates. The first law of Mentat states:

“A process cannot be understood by stopping it. Understanding must move with the flow of the process, must join it and flow with it.”

Consider the data most technical teams have for troubleshooting: system metrics, log entries, transaction checkpoints. All of it is static data indicating a single point in time, and from this data we’re supposed to understand how processes are behaving. Now consider what happens when we collect, aggregate and trend this data over time and review states prior to, during and after incidents. We begin to truly understand process behaviour and the behaviour of the various systems those processes operate within. Now, to be fair, the Application Performance Management tools took this as their goal from the outset, but these tools have only been around for a few years and many organisations are still not investing properly in their monitoring services.
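As a rough illustration of reviewing states prior to, during and after an incident, here is a minimal Python sketch. The function name `summarise_around`, the `margin` parameter and the latency samples are all made up for this example; a real monitoring service would do far more, but the principle of following the metric through time rather than inspecting one frozen value is the same.

```python
from statistics import mean


def summarise_around(samples, start, end, margin):
    """Average a metric before, during and after an incident window.

    samples: list of (timestamp_seconds, value) pairs, in any order.
    start, end: the incident window; margin: how far either side to look.
    """
    windows = {"before": [], "during": [], "after": []}
    for ts, value in samples:
        if start - margin <= ts < start:
            windows["before"].append(value)
        elif start <= ts <= end:
            windows["during"].append(value)
        elif end < ts <= end + margin:
            windows["after"].append(value)
    # A window with no samples is reported as None rather than guessed.
    return {name: mean(vals) if vals else None for name, vals in windows.items()}


# Made-up latency samples: (seconds since start, milliseconds).
samples = [(0, 100), (60, 110), (120, 400), (180, 500), (240, 120), (300, 100)]
summary = summarise_around(samples, start=120, end=180, margin=120)
print(summary)
```

Any single sample here says very little on its own. Comparing the three windows is what shows the latency climbing during the incident and recovering afterwards.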

I’d like to leave you with one final message from Dune:

“Survival is the ability to swim in strange water.”

The water we swim in these days has become very strange. Applications have become services, services are composed of micro-services, and these services might run on platform services or infrastructure services that are themselves dependent on other services. We need DevOps if we’re going to survive in these strange waters.

Buy Dune on Amazon and while you’re at it you may as well buy my book too.

Featured image 77354890.jpg by Clarita on Morguefile.